When ChatGPT burst into the public eye in December 2022, social networks (and not only they) talked about little else for months. A few more months have passed since then, and we’ve moved from Generative AI as a hot topic full of wild visions to more practical applications.

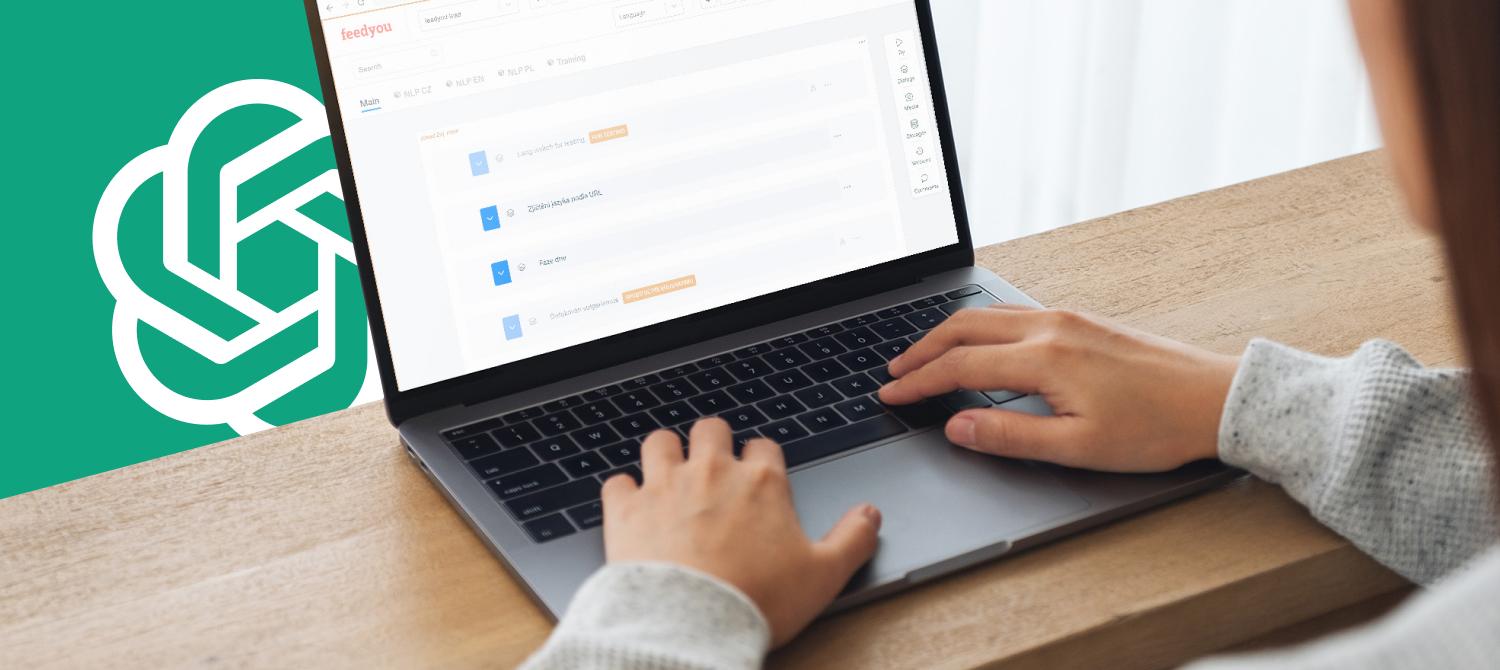

How do we use OpenAI in our Feedyou Platform today when designing and training chatbots and voicebots? Let’s take a closer look at these new capabilities.

1️⃣ A new type of Step in conversation flow design

Anywhere in the structure, we can use the new Generative AI step to easily set everything we need: the wording of the entire prompt as well as basic attributes such as temperature, which determines how creative (or prone to making things up) the GPT model can be and how closely it should stick to the specified context.

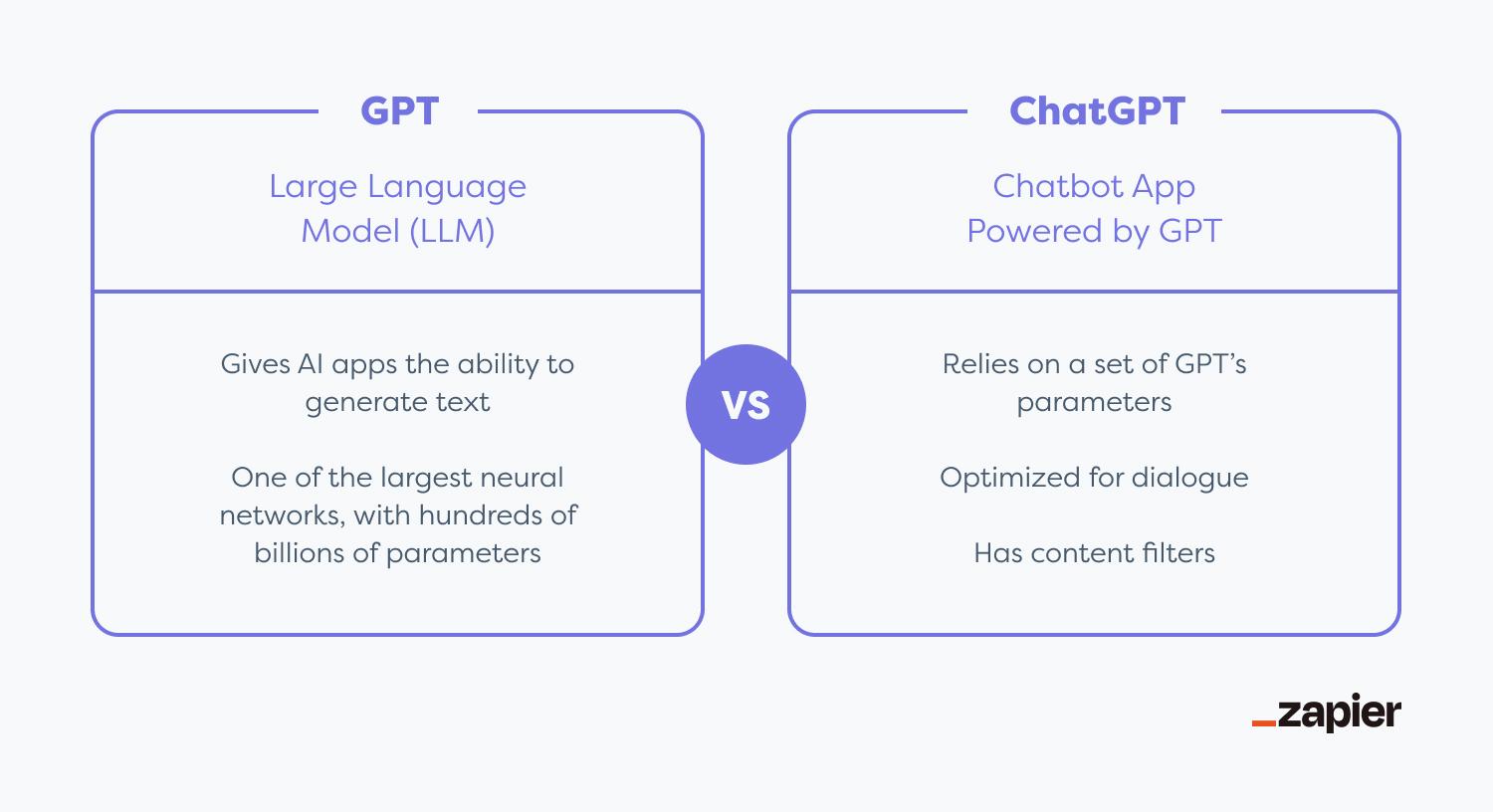
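To make the prompt and temperature settings more concrete, here is a minimal sketch of what such a step could produce under the hood. The `GenerativeStep` class, its field names and the model name are assumptions made for illustration, not the Feedyou Platform's actual implementation; only the payload shape (model, temperature, messages) follows the OpenAI chat completions format.

```python
from dataclasses import dataclass


@dataclass
class GenerativeStep:
    """Hypothetical stand-in for a Generative AI step in a conversation flow."""

    instructions: str             # fixed part of the prompt written by the designer
    temperature: float = 0.2      # low = stick to the context, high = more creative
    model: str = "gpt-3.5-turbo"  # assumed model name, swap for whichever you use

    def build_request(self, user_input: str) -> dict:
        """Return a chat-completions style payload for one user message."""
        # The OpenAI API accepts temperatures between 0 and 2
        if not 0.0 <= self.temperature <= 2.0:
            raise ValueError("temperature must be between 0 and 2")
        return {
            "model": self.model,
            "temperature": self.temperature,
            "messages": [
                {"role": "system", "content": self.instructions},
                {"role": "user", "content": user_input},
            ],
        }


step = GenerativeStep(instructions="Answer briefly and politely.", temperature=0.0)
request = step.build_request("What are your opening hours?")
```

A temperature near 0 suits steps that must stay close to the given context, while higher values fit steps such as greetings or trivia where variety is welcome.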

ChatGPT is the application, GPT is its brain.

First of all, let’s clarify the terms. We often hear that companies want to connect to ChatGPT, or we read articles about how a tool uses a connection to ChatGPT. A word of caution here, because what exactly does the OpenAI documentation say?

Simply put: ChatGPT is a chat interface built on top of the GPT models and other features that OpenAI offers.

That’s why we’re not talking about using ChatGPT, but “just” the GPT models and the ecosystem around them. And it’s thanks to this connection that we’re able to easily implement a lot of clever conversational tricks. We’re going to show a few of them today.

ℹ️ These are examples of what a prompt might look like for a given situation and what output we get. This can then be either just passed to the conversation or passed to a GPT function that will, for example, save the data to a reporting or external system.

Sentiment analysis based on user input

Do you want to not only get verbal feedback from the user, but also categorize it quickly and easily by sentiment for subsequent reporting?

The GPT is given the task of categorizing the inputs:

Imagine it is your job to figure out the sentiment of the answer you got. Try to classify the input into one of the following categories:

- “positive”

- “neutral”

- “negative”

And if you’re not sure, put it in the “unrecognized” category. Here are the entries and for each one try to assign one of the categories:

- I was in your store today and the cashier was amazing, she deserves a bonus for how willingly she showed me everything and how patient she was.

- Are you kidding me? After all this, you still have the nerve to ask my opinion?

- I am always satisfied with your service and this time was no different.

The output will then look like this:

- positive

- negative

- positive
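Before the categories go into reporting, it helps to check that the model really returned one of the allowed labels. The following is a minimal sketch of how the prompt above could be assembled and its output parsed; the function names are hypothetical, and the extra instruction to return one category per line is an assumption added to make parsing predictable.

```python
CATEGORIES = ("positive", "neutral", "negative")
FALLBACK = "unrecognized"


def build_sentiment_prompt(entries: list[str]) -> str:
    """Assemble the classification prompt for a batch of user answers."""
    lines = [
        "Imagine it is your job to figure out the sentiment of the answer you got.",
        "Classify each input into one of: " + ", ".join(CATEGORIES) + ".",
        f'If you are not sure, use "{FALLBACK}".',
        "Return one category per line, in the same order as the entries.",
        "",
    ]
    lines += [f"- {entry}" for entry in entries]
    return "\n".join(lines)


def parse_sentiments(model_output: str, expected: int) -> list[str]:
    """Turn the model's bullet list into exactly `expected` validated labels."""
    labels = []
    for raw in model_output.splitlines():
        # Drop the bullet, surrounding whitespace and any quotation marks
        label = raw.strip().lstrip("-").strip().strip('"“”„').lower()
        if not label:
            continue
        # Anything outside the allowed set is treated as unrecognized
        labels.append(label if label in CATEGORIES else FALLBACK)
    # Pad or trim so the caller always gets one label per entry
    labels = labels[:expected]
    labels += [FALLBACK] * (expected - len(labels))
    return labels
```

Mapping unexpected answers to “unrecognized” keeps the reporting data clean even when the model replies with something outside the list.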

Automatic response to user queries in their language

Do you have solutions and answers in only certain languages? No problem. The user can type in any language; GPT will simply recognize it and respond in the same language. For example, it can explain that it is only able to hold the conversation in English, or redirect the user to a relevant channel where you support their language.

So, behind the scenes, we tell GPT to detect the user’s language:

Imagine you are a tool whose job is to recognize the language of the user. Use the ISO 639-1 code to identify the language. Now try to determine the language used for the following text and translate the message “Hello, I’m sorry, but I only understand Czech” into that language.

Hallo, ich möchte eine Rate bezahlen und kann dies nicht, da mein Konto leider durch Zwangsvollstreckung gesperrt ist und ich nur fragen muss, ob ich die Rate von einem anderen Konto aus bezahlen könnte

The output will then look like this:

Detected language: German

Translation: “Guten Tag, es tut mir leid, aber ich verstehe nur Tschechisch.”
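To pass the detected language and the translated message on to the conversation separately, the reply has to be split into its two parts. Here is a minimal sketch that assumes the model keeps the two-line “Detected language / Translation” layout shown above; `parse_language_reply` is a hypothetical helper, and it returns `None` when the layout is not followed so the flow can fall back to a default message.

```python
import re
from typing import NamedTuple, Optional


class LanguageReply(NamedTuple):
    language: str
    translation: str


def parse_language_reply(model_output: str) -> Optional[LanguageReply]:
    """Extract the detected language and the translated message, if both are present."""
    language = re.search(r"^Detected language:\s*(.+)$", model_output, re.MULTILINE)
    translation = re.search(r"^Translation:\s*(.+)$", model_output, re.MULTILINE)
    if not language or not translation:
        return None  # unexpected layout, let the caller decide what to do
    return LanguageReply(
        language=language.group(1).strip(),
        # Remove straight or curly quotation marks around the translation
        translation=translation.group(1).strip().strip('"“”'),
    )
```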

Always up-to-date personalised welcome messages

Do users return to your chatbot or voicebot repeatedly and you want to offer them the best experience? How about a personalized welcome that’s always up-to-date? For example, according to the time of day, the weather or even the exchange rate of CZK / EUR / USD / … currencies. The possibilities are almost endless.

The GPT is given the task of creating a short welcome:

Imagine you’re a chatbot, tasked with greeting users with a short message as part of a new conversation on the site. Try to use the message to let them know that it’s 11am and that the user’s name is František. How would you greet the user?

The result might look like this:

Good morning, František! Welcome to our website. How can I help you this morning?
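The up-to-date part comes from filling the prompt with current values each time a conversation starts. A minimal sketch, assuming the user's name and the current time are already known to the bot; the function names and the morning/afternoon/evening boundaries are illustrative choices, not platform behavior.

```python
from datetime import time


def part_of_day(now: time) -> str:
    """Rough bucket for the time of day (boundaries are an arbitrary choice)."""
    if now.hour < 12:
        return "morning"
    if now.hour < 18:
        return "afternoon"
    return "evening"


def build_welcome_prompt(user_name: str, now: time) -> str:
    """Fill the greeting prompt with the current time and the user's name."""
    return (
        "Imagine you're a chatbot, tasked with greeting users with a short "
        "message as part of a new conversation on the site. "
        f"Let them know that it's {now.strftime('%H:%M')} ({part_of_day(now)}) "
        f"and that the user's name is {user_name}. "
        "How would you greet the user?"
    )


prompt = build_welcome_prompt("František", time(11, 0))
```

Other live values, such as the weather or an exchange rate, could be added to the prompt in the same way.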

A little trivia while the user waits

Does your bot use integrations with your internal systems, and do they sometimes take time to respond? How about shortening the user’s wait with fun trivia that is always relevant to what the user is waiting for? GPT can handle that too.

GPT is given the task of creating a short text:

Imagine you are a chatbot that is tasked with keeping the user busy while waiting for you to resolve their authentication in an external system. So the user is in the middle of a conversation and wants to find out how much vacation time they have left. Try to come up with a short, interesting and ideally funny story that they can read while we verify them.

The result might look like this:

Did you know that, on average, people remember their holidays every 15 seconds? This is despite the fact that many of us dream of other holidays as soon as we return from the previous one. Perhaps it’s due to the desire for relaxation and adventure that a holiday offers. However short is the time until your next vacation, hopefully you will soon find out how many more vacation days you have left!
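The trick only pays off if the trivia is produced while the slow integration is already running. Below is a minimal sketch of that ordering with `asyncio`; both coroutines are placeholders (the sleeps stand in for a real internal system and a real GPT call, and the returned values are dummies), and `send` represents whatever delivers a message to the user.

```python
import asyncio


async def fetch_vacation_days(user_id: str) -> int:
    """Placeholder for a slow call to an internal system."""
    await asyncio.sleep(0.2)
    return 12  # dummy value for the sketch


async def generate_trivia(topic: str) -> str:
    """Placeholder for a GPT call that returns a short piece of trivia."""
    await asyncio.sleep(0.05)
    return f"Here is a fun fact about {topic} while you wait."


async def answer_with_trivia(user_id: str, send) -> int:
    # Start the slow lookup first so it runs in the background
    lookup = asyncio.create_task(fetch_vacation_days(user_id))
    # Meanwhile, generate the trivia and show it to the user
    send(await generate_trivia("holidays"))
    # Only now wait for the real answer
    days = await lookup
    send(f"You have {days} vacation days left.")
    return days


messages = []
asyncio.run(answer_with_trivia("user-1", messages.append))
```

Because the lookup is started before the trivia is generated, the user's total waiting time is not extended by the extra GPT call.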

2️⃣ New NLP provider supported by Feedyou Platform

Thanks to its linguistic skills, we use GPT not only for text generation itself, but also for extending our current NLP.

Within the Feedyou Platform we currently apply two basic approaches:

NLP Intent Classification

The definition of the NLP model in our NLP Designer remains the same, so we have prepared areas of interest (intents) and we use GPT to better understand the user’s query and assign the correct intent. The query is then processed by our proprietary Feedyou NLP Engine.

The immense advantage in this case is that we stay in complete control of the entire communication. With GPT we “only” improve the understanding of the user and the so-called match rate, i.e. how often we answer the user’s query correctly and exactly as intended.
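Staying in control here means that GPT may only choose among intents that already exist in the model, and anything else is rejected. A minimal sketch of that guard, assuming the model is asked to answer with a bare intent name; the function names, the prompt wording and the `none` fallback are illustrative and not the Feedyou NLP Engine's API.

```python
def build_intent_prompt(query: str, intents: list[str]) -> str:
    """Ask the model to pick exactly one of the predefined intents."""
    options = "\n".join(f"- {name}" for name in intents)
    return (
        "Pick the single intent that best matches the user's query. "
        "Answer with the intent name only, or 'none' if nothing fits.\n"
        f"Intents:\n{options}\n"
        f"Query: {query}"
    )


def resolve_intent(model_output: str, intents: list[str], fallback: str = "none") -> str:
    """Accept the model's answer only if it names a known intent."""
    candidate = model_output.strip().strip("'\"").lower()
    known = {name.lower(): name for name in intents}
    return known.get(candidate, fallback)
```

For example, `resolve_intent(" Pay_Installment\n", ["opening_hours", "pay_installment"])` resolves to `pay_installment`, while an answer that names no known intent resolves to `none` and can be handled by the regular fallback of the conversation.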

NLP model

In this case, we use GPT for the entire communication. At the start we pass it the information we want it to have available: information about the company, the product, or answers to frequently asked questions. Using basic settings, we only specify how much GPT should think about, and the rest is up to the model.

This is probably the fastest way today to build an NLP model and to give users easy access to the information you want to share with them. However, it is primarily suited to cases where GPT can be more creative and where it does not matter if it occasionally makes things up.
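One common way to hand the model its starting information is to place it in the system message of the conversation. The sketch below assumes that approach; the function name, the instruction wording and the sample company facts are invented for illustration and do not describe how the platform stores or passes its knowledge.

```python
def build_grounded_messages(facts: list[str], faq: dict[str, str], question: str) -> list[dict]:
    """Put the company knowledge into the system message, the question into the user message."""
    fact_lines = "\n".join(f"- {fact}" for fact in facts)
    faq_lines = "\n".join(f"Q: {q}\nA: {a}" for q, a in faq.items())
    system = (
        "Answer using only the information below. "
        "If the answer is not there, say you do not know.\n\n"
        f"About the company:\n{fact_lines}\n\n"
        f"Frequently asked questions:\n{faq_lines}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


messages = build_grounded_messages(
    facts=["Example Corp sells bicycles."],              # invented sample fact
    faq={"Do you ship abroad?": "Yes, within the EU."},  # invented sample FAQ
    question="Can you deliver to Germany?",
)
```

An instruction to admit missing knowledge reduces invented answers but does not rule them out, which is why this approach fits less critical use cases.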

3️⃣ Speech To Text using OpenAI Whisper

ChatGPT has received incredible attention, but alongside it, OpenAI also released a similarly powerful tool called Whisper. It’s a speech-to-text technology, transcribing speech into text, that has significantly pushed the boundaries of what was previously possible.

We currently use Whisper primarily in situations where we expect more complex input from the user, something that the existing technologies couldn’t handle.

As a small example of a big difference, consider a short set of license plates, where we compared Google’s STT (using class tokens for even better understanding) and Whisper STT. The difference is clear.
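A comparison like this can be scored by normalizing each transcript and counting exact matches against the expected plate. The following is a minimal sketch of such a scoring helper; it is not the evaluation used for the comparison above, and the function names and normalization rule (uppercase, letters and digits only) are assumptions.

```python
import re


def normalize_plate(text: str) -> str:
    """Uppercase and drop everything except letters and digits."""
    return re.sub(r"[^A-Z0-9]", "", text.upper())


def exact_match_rate(expected: list[str], transcripts: list[str]) -> float:
    """Share of transcripts that equal the expected plate after normalization."""
    if len(expected) != len(transcripts):
        raise ValueError("expected and transcripts must have the same length")
    if not expected:
        return 0.0
    hits = sum(
        normalize_plate(plate) == normalize_plate(heard)
        for plate, heard in zip(expected, transcripts)
    )
    return hits / len(expected)
```

Running the same set of recordings through each STT engine and comparing the two rates gives a single number per engine; exact matching is deliberately strict, since a plate with one wrong character is useless in practice.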

See what it can look like in practice

A GPT-powered chatbot that easily answers questions about your business domain.

How much do I have in my account, or what is the CZK/EUR exchange rate? Listen to our voicebot with a GPT model, designed for the banking sector.

GPT-powered voicebot solution for any sales team – helping with answering FAQs and arranging meetings. On top of that, it can handle small talk.
